[Press release re-shared from the National Education Policy Center // NEPC Publication]

“Digital technologies used in schools are increasingly being harnessed to amplify corporate marketing and profit-making and extend the reach of commercializing activities into every aspect of students’ school lives. In addition to the long-standing goal of providing brand exposure, marketing through education technology now routinely engages students in activities that facilitate the collection of valuable personal data and that socialize students to accept relentless monitoring and surveillance as normal, according to a new report released by the National Education Policy Center.

In Asleep at the Switch: Schoolhouse Commercialism, Student Privacy, and the Failure of Policymaking, the NEPC’s 19th annual report on schoolhouse commercialism trends, University of Colorado Boulder researchers Faith Boninger, Alex Molnar and Kevin Murray examine how technological advances, the lure of “personalization,” and lax regulation foster the collection of personal data and have overwhelmed efforts to protect children’s privacy. They find that for-profit entities are driving an escalation of reliance on education technology with the goal of transforming public education into an ever-larger profit center—by selling technology hardware, software, and services to schools; by turning student data into a marketable product; and by creating brand-loyal customers.

Boninger points out that “policymaking to protect children’s privacy or to evaluate the quality of the educational technology they use currently ranges from inadequate to nonexistent.”

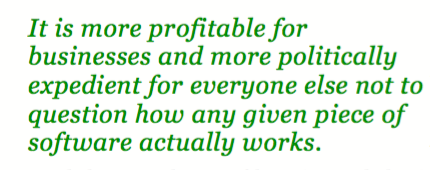

“Schools and districts are paying huge sums of money to private vendors and creating systems to transfer vast amounts of children’s personal information to education technology companies,” explains Molnar. “Education applications, especially applications that ‘personalize’ student learning, are powered by proprietary algorithms, without anyone monitoring how student data are being collected or used.” [Emphasis added]

Asleep at the Switch documents the inadequacy of industry self-regulation and argues that to protect children’s privacy and the quality of their education, legislators and policymakers need to craft clear policies backed by strong, enforceable sanctions.

Such policies should:

- Prohibit schools from collecting student personal data unless rigorous, easily understood safeguards for the appropriate use, protection, and final disposition of those data are in place.

- Hold schools, districts, and companies with access to student data accountable for violations of student privacy.

- Require algorithms powering education software to be openly available for examination by educators and researchers.

- Prohibit adoption of educational software applications that rely on algorithms unless a disinterested third party has examined the algorithms for bias and error, and unless research has shown that the algorithms produce intended results.

- Require independent third-party assessments of the validity and utility of technologies, and the potential threats they pose to students’ well-being, to be conducted and addressed prior to adoption.

Additionally, the report authors encourage parents, teachers, and administrators to publicize the threats that unregulated educational technologies pose to children and the importance of allowing disinterested monitors access to the algorithms powering educational software.”

___________________

Below is a revealing segment of the report describing the entry of educational technology programs into schools:

“Major players include computer manufacturers and operating system providers: Apple, Google, IBM, and Microsoft. Pearson, an education company best known as a testing giant, is also influential. Facebook, besides being the social media platform of choice for school groups and teams, has partnered with Summit Public Schools, a nonprofit charter school network, to produce its Personalized Learning Platform (PLP), used by at least 100 schools throughout the United States.

All kinds of companies, including entertainment and toy companies, are eager to cash in on education technology mania. Aspiring “ed tech” millionaires gather annually at conferences such as SXSWedu in Austin and at EdTechXGlobal conferences in Europe and Asia, where they develop and share their latest ideas for how to “radically disrupt” education. Rachael Stickland, co-founder of the organization Parent Coalition for Student Privacy, reported that at SXSWedu, speakers encouraged entrepreneurs to get in and make their mark in this ripe and loosely regulated market. “The message at SXSWedu is loud and clear,” she wrote in March 2017. “To win the game, hurry up and get your fair share before the research, evidence and privacy laws catch up to us!” [Emphasis added]

Education administrators are now busy revising policies to facilitate the entry of new software applications for teacher use. In the past, schools followed “request for proposal” processes in which they would evaluate proposals from many different companies before they would decide on a purchase—processes that could take six months to two years. To move things along more quickly, some schools have replaced the proposal process with product implementation demonstrations. Municipalities, such as New York and Chicago, now help match schools with education technology companies. These policies are consistent with the technology industry’s approach of moving to market without extensive product testing. In other words, they encourage schools to use children as test subjects for product development.” [Emphasis added] (p. 13)

The authors acknowledge the powerful political, financial, and social forces that converge to perpetuate a collective blind faith in the untested algorithms shaping children’s learning experiences and assessments.

“Scientists who study psychological measurement have a healthy skepticism about the ability to measure such abstract latent variables as “learning,” “understanding,” or “perseverance”—or to predict such things as the likelihood of a child’s dropping out. They understand that any theory of learning or behavior can at best only partially explain or predict students’ outcomes, that any dataset is necessarily reductive and incomplete, and that there is always a certain amount of error involved in any model. And finally, built into their scientific process is open debate about how to best measure, explain, and predict. Sellers of “personalized learning” software and policymakers eager to support innovation and the “radical disruption” of education—and district leaders and teachers under pressure both to work with the business community and to adopt the newest, best technology—are much less likely to wander into these weeds.

Doing so could raise serious doubts about the ability of that software to do what its creators claim it can do, and could significantly delay, if not prevent altogether, a school from adopting it.” (p. 16)

Find Asleep at the Switch: Schoolhouse Commercialism, Student Privacy, and the Failure of Policymaking, by Faith Boninger, Alex Molnar, and Kevin Murray, on the web at: http://nepc.colorado.edu/publication/schoolhouse-commercialism-2017.